News & Blog

Steinwurf nominated for best Exhibition at IEEE 6G Summit

A glorious week in Dresden at the IEEE 6G Summit, giving everyone a demo of RAMP.

Steinwurf Talks Remote Operations at Oceanology

The Steinwurf team was at Oceanology International this week, talking to current and future clients about our latest product, RAMP.

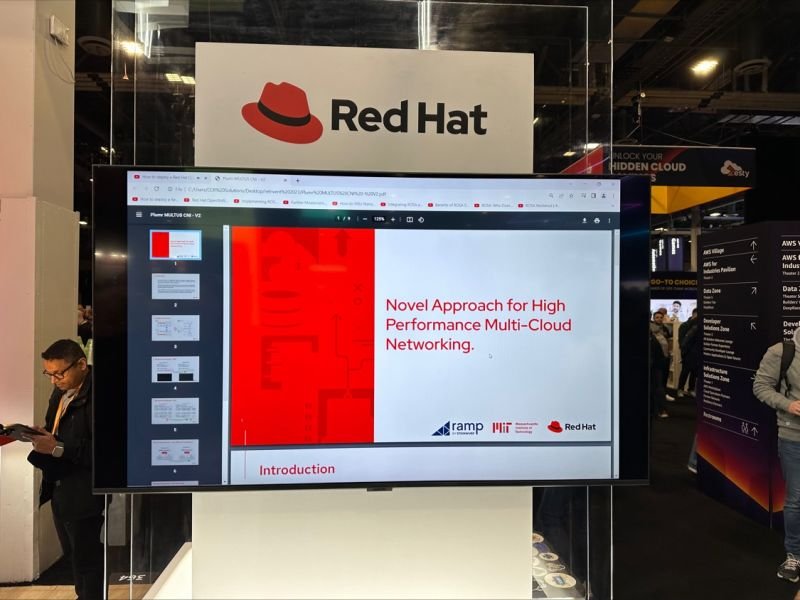

Ramp demo at MWC24 with Red Hat

The Steinwurf team is in Barcelona for MWC24 with a demo of our latest product, RAMP, at the Red Hat booth.

Collaboration With Red Hat improving throughput 100x and jitter 500x

This Medium article by Fatih Nar shows how multi-cloud networking with network coding, using Steinwurf’s Ramp over Kubernetes, improves throughput 100x and jitter 500x.

Red Hat, Steinwurf and MIT demo at AWS re:Invent 2023

Steinwurf’s Ramp software was featured in a Red Hat demo this week at AWS re:Invent.

Nvidia GeForce Now Demo with Steinwurf’s Rely

When using GeForce Now over a poor network, turning on our software (Rely) restores smooth gameplay, and you can go on streaming from a machine in the cloud, none the wiser.